Nvidia isn’t just dominating the AI chip market; it’s starting to invest in ways to keep everyone else playing in its sandbox. On March 31, the company announced a $2 billion strategic investment in Marvell Technology, alongside a deep technical partnership that ties the smaller chipmaker’s custom silicon directly into Nvidia’s expanding AI infrastructure ecosystem.

The deal, which sent Marvell shares up more than 11 percent in early trading, isn’t framed as a traditional venture-style bet on a scrappy startup. Marvell is a seasoned public company with a market capitalization north of $80 billion, known for its work in data-center networking, storage, and custom accelerators. But the timing and structure speak volumes about where Nvidia sees the next phase of the AI hardware race heading: not pure monopoly, but controlled coexistence.

At the heart of the announcement is NVLink Fusion, Nvidia’s rack-scale platform designed to let customers build semi-custom AI clusters that mix Nvidia’s GPUs, LPUs, and networking gear with third-party accelerators. Marvell will contribute custom XPUs, its term for specialized processing units, and NVLink-compatible scale-up networking. Nvidia, in turn, supplies the supporting cast: its Vera CPU, ConnectX network interface cards, BlueField data processing units, Spectrum-X switches, and the full rack-scale AI compute stack. The two companies will also collaborate on silicon photonics and advanced optical interconnects, technologies critical for moving massive amounts of data efficiently inside AI factories.

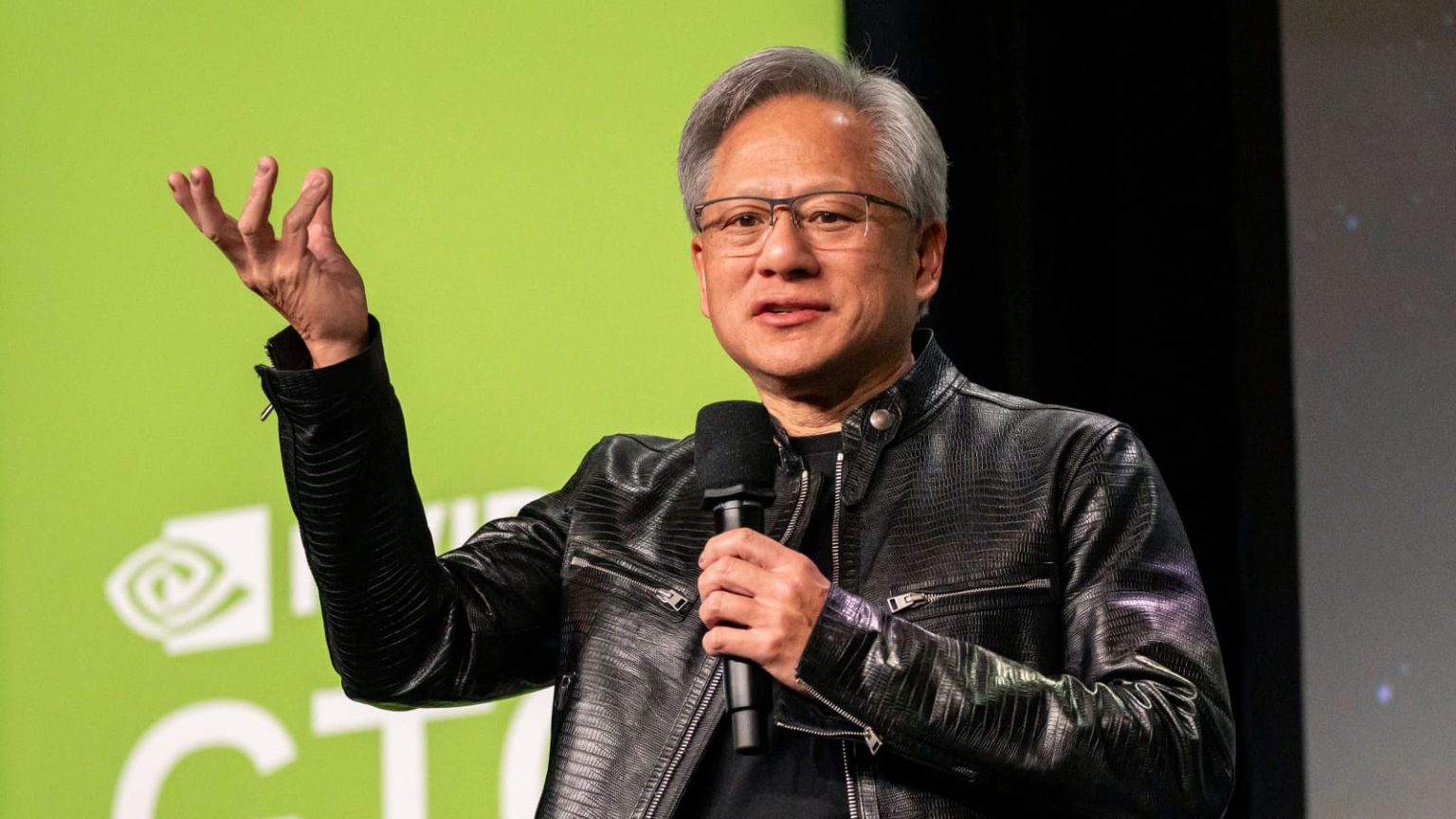

Jensen Huang, Nvidia’s founder and CEO, put it plainly in the joint statement: “The inference inflection has arrived. Token generation demand is surging, and the world is racing to build AI factories. Together with Marvell, we are enabling customers to leverage NVIDIA’s AI infrastructure ecosystem and scale to build specialized AI compute.”

Matt Murphy, Marvell’s chairman and CEO, focused on the connectivity side of the deal: “Our expanded partnership with NVIDIA reflects the growing importance of high-speed connectivity, optical interconnect and accelerated infrastructure in scaling AI. By connecting Marvell’s leadership in high-performance analog, optical DSP, silicon photonics and custom silicon to NVIDIA’s expanding AI ecosystem through NVLink Fusion, we are enabling customers to build scalable, efficient AI infrastructure.”

The subtext is hard to miss. For years, the biggest cloud providers (Amazon, Google, Microsoft, and Meta) have poured billions into their own custom AI chips. Amazon has Trainium and Inferentia. Google has its TPUs. Microsoft is pushing Maia. These ASICs are built to run specific workloads at lower cost and higher efficiency than off-the-shelf Nvidia GPUs, especially for inference, where raw training horsepower matters less than steady, power-efficient token generation. The result has been a slow but noticeable erosion of Nvidia’s near-total dominance in the data-center AI market.

Rather than fight that trend head-on, Nvidia is doing what it does best: extending its software and interconnect moat so that even custom silicon still runs best inside an Nvidia-centric world. NVLink Fusion essentially lets hyperscalers and enterprises design their own accelerators while keeping the high-bandwidth, low-latency fabric that makes large-scale AI training and inference possible. It’s a pragmatic hedge. Customers get more choice and potentially lower costs. Nvidia keeps the ecosystem lock-in.

This isn’t Nvidia’s first such move. Earlier in March, the company made similar $2 billion investments in optical component makers Lumentum and Coherent, bets aimed at solving the same bandwidth and power bottlenecks that limit how big AI clusters can grow. Taken together, these moves suggest a deliberate strategy: Nvidia is betting that the future of AI infrastructure won’t be all-GPU or all-custom, but hybrid, and that the company best positioned to supply the glue (interconnects, networking, software) will win.

Marvell, for its part, brings real credibility to the table. It has been quietly building a custom-silicon business serving exactly the hyperscalers now looking for alternatives. Its long history in Ethernet, DSPs, and data infrastructure gives it the analog and optical expertise Nvidia needs as clusters scale into the hundreds of thousands of accelerators. The partnership also extends into AI-RAN, Nvidia’s push to turn telecom networks into AI infrastructure for 5G and 6G, another area where Marvell’s radio and networking chops could prove useful.

For investors, the signal is clear. Nvidia’s market cap already dwarfs the combined value of most of its semiconductor peers, yet the company continues to find ways to expand its addressable market without diluting its core GPU business. Marvell gets an immediate credibility boost and a direct line into the world’s largest AI infrastructure projects. Wall Street’s reaction (Marvell shares jumping sharply while Nvidia ticked modestly higher) reflects that alignment.

What this doesn’t do is solve the broader supply-chain crunch or the eye-watering capital expenditure bills facing every company racing to deploy AI. Building these hybrid factories still requires massive upfront investment in power, cooling, and real estate. But it does lower one key barrier: the risk of betting on a single vendor. By making its ecosystem more permeable, Nvidia is effectively saying that the pie is growing fast enough that sharing a slice can still be highly profitable.

The inference wave Huang referenced is real. Large language models are moving from experimental demos to production workloads at hyperscale. Token generation, essentially the everyday operation of chatbots, recommendation engines, and autonomous agents, now dominates demand in many data centers. That shift favors efficiency over peak FLOPS, which is precisely where custom silicon shines. Nvidia’s latest move acknowledges that reality while ensuring its own technology remains the default foundation.

Whether this partnership delivers the promised flexibility at scale remains to be seen. Integration timelines weren’t disclosed, and real-world performance will depend on how well Marvell’s XPUs mesh with Nvidia’s full software stack. But the intent is unmistakable. In an industry where everyone is scrambling to build the next AI factory, Nvidia is positioning itself as the company that can supply not just the engines but the entire assembly line, while inviting others to bring their own specialized parts.

It’s a classic Nvidia play: turn potential competition into ecosystem expansion. And in the current AI gold rush, that might be the smartest $2 billion the company has spent all year.